The Vibe Check. What This Issue Feels Like

High Tech Tea - Made by Jeneba in Gemini

Letter From The Editor

AI moves at the speed of thought. Our legal frameworks remain anchored in the twentieth century. And the friction between these two worlds has reached a fever pitch.

Here’s What Happened This Week In AI: Three stories that I can't stop thinking about:

The Model Wars: OpenAI vs. Anthropic

The AI girls are fighting! This week featured major back-to-back releases of flagship models focused on coding and agentic workflows.

• OpenAI Launches GPT-5.3-Codex: OpenAI released its most capable coding model yet, GPT-5.3-Codex. It reportedly sets new highs on benchmarks like SWE-Bench Pro (57%) and Terminal-Bench 2.0 (77%). Notably, OpenAI revealed that early versions of this model were used to identify bugs in its own training runs, effectively closing the feedback loop where AI helps build better AI. The model was also flagged with a "High" cybersecurity risk rating. Basically, AI being used to build itself.

• Anthropic Releases Claude Opus 4.6: Anthropic countered with Opus 4.6, a model designed for enterprise knowledge work with a 1 million token context window. Key features include "agent teams" in Claude Code, allowing multiple specialized agents to parallelize tasks, and deep integrations with Microsoft Office (PowerPoint and Excel) to build decks and models natively.

Perplexity's Model Council: Perplexity launched a feature called Model Council, allowing users to query three frontier models (e.g., Opus 4.6, GPT-5.2, Gemini 3) simultaneously. A synthesizer model then resolves conflicts to provide a single, higher-confidence answer.

AI For The Rest Of Us: Here’s What All That Means:

Team OpenAI: The "Self-Building" Speed Demon

OpenAI just unleashed GPT-5.3-Codex, and it’s not just a coding tool; it’s an engineer that helped build its own house.

The Flex: For the first time, a model closed the feedback loop. OpenAI admitted early versions of 5.3 were used to debug and optimize the model’s own training runs.

The Stats: It’s crushing Terminal-Bench 2.0 (77%), basically proving it can navigate a computer better than most junior devs.

The Warning: It comes with a "High" cybersecurity risk rating. It's so good at finding exploits that OpenAI had to trigger special safety protocols just to launch it.

Team Anthropic: The "Vibe-Working" Strategist

Anthropic hit back with Claude Opus 4.6, focusing on deep context and "Coworker" vibes.

The Brain: It features a 1-million-token context window (Beta), meaning you can feed it a small library of technical manuals and it won't break a sweat.

The Squad: It introduced "Agent Teams," where Claude can use multiple specialized sub-agents to parallelize your work (e.g., one writes the code, one writes the tests, one builds a landing page for you).

Office Goals: It’s now natively integrated with PowerPoint and Excel, meaning it doesn't just "talk" about data; it builds the deck for your Monday morning meeting while you’re at brunch.

The Wildcard: Perplexity’s "Model Council"

Tired of picking a side? Perplexity just launched Model Council.

The Play: It lets you query Opus 4.6, GPT-5.2, and Gemini 3 simultaneously.

The Synthesizer: A separate "judge" model then reviews all three answers, highlights where they disagree, and gives you a single, high-confidence verdict. It’s like having a boardroom of experts where you only have to talk to the Chairman.
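For the builders reading: the council-plus-judge pattern can be sketched in a few lines. This is a minimal illustration, not Perplexity's implementation. The three `ask_model_*` functions are hypothetical stand-ins that return canned answers instead of calling real models, and the `synthesize` rule (majority vote with dissent flagged) is an assumption chosen for simplicity.

```python
from collections import Counter
from typing import Callable, Dict

# Hypothetical stand-ins for three frontier models (no real API calls).
def ask_model_a(question: str) -> str:
    return "Paris"

def ask_model_b(question: str) -> str:
    return "Paris"

def ask_model_c(question: str) -> str:
    return "Lyon"

def convene_council(question: str, members: Dict[str, Callable[[str], str]]) -> Dict[str, str]:
    """Ask every council member the same question and collect the answers."""
    return {name: ask(question) for name, ask in members.items()}

def synthesize(answers: Dict[str, str]) -> dict:
    """Toy 'judge': pick the majority answer and flag who disagreed."""
    counts = Counter(answers.values())
    verdict, votes = counts.most_common(1)[0]
    dissenters = sorted(name for name, answer in answers.items() if answer != verdict)
    return {
        "verdict": verdict,
        "agreement": votes / len(answers),  # share of members backing the verdict
        "dissenters": dissenters,
    }

council = {"model_a": ask_model_a, "model_b": ask_model_b, "model_c": ask_model_c}
answers = convene_council("What is the capital of France?", council)
result = synthesize(answers)
print(result)
```

The part worth noticing is `dissenters`: a single verdict is only as trustworthy as your view into where the models disagreed, so keep that list visible instead of hiding it behind the Chairman.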

Which one are you testing this week?

🏗️ Why It Matters (The "Hard Hat" View)

This isn't just a benchmark war; it's an Infrastructure Revolution.

SaaS-pocalypse: Anthropic’s new legal and office tools wiped nearly $1 trillion off software stocks in a week. Why pay for 10 different subscriptions when one "Agent Team" can do it all?

Human-in-the-Lead: We are moving into the "Vibe Working" era. Your job is no longer to do the work, but to orchestrate the agents who do.

The Department of Transportation is using Google's Gemini to draft federal regulations in under 20 minutes. The stated goal? Use AI-generated rules to eliminate 50% of federal regulations. Yikes.

The Department of War released a memo declaring "speed wins" as official policy for military AI, explicitly stating that "the risks of not moving fast enough outweigh the risks of imperfect alignment."

The Moltbot scandal showed what happens when individuals hand root access to a viral AI agent without any threat modeling. Hundreds of control panels exposed to the open internet. Private DMs and API keys leaked globally. Because people substituted social media for good judgment.

Meanwhile, America’s national power grid, overburdened by the insatiable energy demands of AI data centers, is reaching its structural limits. And 45 states have introduced AI bills to create guardrails that federal policy isn't providing.

This is no longer just a story about the best prompts or smarter chatbots.

It's a story about governance debt.

Every organization from federal agencies to individual users is accumulating governance debt when they prioritize speed over judgment, vibes over infrastructure, hype over accountability.

The question I keep coming back to: In a world of 20-minute regulations and root-access-on-demand, what's most valuable, what has meaning, and what's a commodity?

It's not speed. It's not even the AI itself.

It's judgment and taste. The human capacity to discern things and know when to move and when to pause. When to trust and when to verify. When to automate and when to stay in the lead.

That's what this issue is about. Let's get into it.

— Jeneba 👩🏾💻

Fractional AI Advisor | AI Adoption Strategist | Your guide from vibes-based AI to governed, human-led AI.

TL;DR - The Signals That Matter

5 Shifts Redefining Policy, Power, and Equity

My take as an AI literacy educator: This week's news isn't just information; it's a map of where power is moving. If you understand these five shifts, you understand what's coming. And if you understand what's coming, you can position yourself accordingly.

Shift 1: "Good Enough" Governance — The 20-Minute Regulation

What happened: The Department of Transportation is using Google's Gemini to automate the drafting of federal regulations.

The context: This is the direct output of a strategy proposed by the Department of Government Efficiency (DOGE), which seeks to eliminate 50% of federal regulations through AI-driven rescissions.

The driver: A depleted workforce. Following significant reductions from Trump and Elon Musk, the DOT has lost nearly 4,000 employees, including over 100 veteran attorneys.

The strategy they're calling "Flood the Zone":

Now they claim AI can handle 80-90% of the drafting work

The objective has shifted from technical perfection to high volume

The administration is betting that "high-verisimilitude text" will overwhelm the sluggish bureaucracy

What no one is talking about:

Hallucination risk: Critics warn that relying on AI for air safety or hazardous material transport is "wildly irresponsible"

Sycophancy problem: AI tends to mirror the user's prompt rather than offer reasoned analysis

Legal question: Courts require "reasoned judgment" under the Administrative Procedure Act. Can a human "proofreading" a machine-generated product satisfy that standard?

"We don't need the perfect rule on XYZ... We want good enough. We're flooding the zone." — Gregory Zerzan, DOT General Counsel

🧠 Here’s what I think:

This is exactly what I warn about in my AI adoption work: speed without judgment is dangerous.

When I led AI adoption for clients in the past, I learned that the organizations who succeed aren't the ones who move fastest. They're the ones who build governance into their workflows from day one. They ask: What needs human review? Where are the failure points? What happens when it's wrong?

"Good enough" works for low-stakes tasks. But air safety? Hazmat transport? These are domains where "good enough" can mean people die.

The irony chile: the same government that's supposed to regulate AI is now using AI to deregulate itself without the governance frameworks that responsible adoption requires.

This is what happens when you prioritize velocity over validity. When you measure success by volume, not by outcomes. When you treat AI as a replacement for expertise rather than an augmentation of it.

The question I keep asking: If we wouldn't accept "good enough" from a human attorney drafting these regulations, why would we accept it from an AI?

Shift 2: The Grid's Secret Weapon (AI and the Environment)

What happened: AI and data center demand is projected to hit 92 GW by 2027. The power grid can't keep up. But the most innovative solution isn't building more plants, it's the strategic use of silence.

How it works: Instead of demanding 24/7/365 maximum power guarantees, data center operators are agreeing to short-duration curtailments of less than 50 hours per year. This "Flexible Load Management" allows utilities to integrate massive new loads without immediate infrastructure upgrades.

The tradeoff: In exchange for flexibility, data center operators receive "same-day interconnection approvals," bypassing a backlog that has historically delayed projects by years.
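To put "less than 50 hours per year" in perspective, here is a quick back-of-the-envelope calculation. It is only arithmetic on the 50-hour ceiling quoted above; the function name is illustrative, and real curtailment agreements will have terms this sketch ignores.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year

def curtailment_share(curtailed_hours: float) -> float:
    """Fraction of the year a flexible load agrees to be dialed back."""
    return curtailed_hours / HOURS_PER_YEAR

share = curtailment_share(50)
print(f"Curtailed: {share:.2%} of the year")
print(f"Running normally: {1 - share:.2%} of the year")
```

Fifty hours works out to a bit over half a percent of the year, which is why the trade looks attractive: give up a sliver of uptime, skip a multi-year interconnection queue.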

🧠 Here’s What I Think:

This is the part of the AI story that the tech industry doesn't want to talk about: physical infrastructure is the hard limit on AI growth.

We can build smarter models. We can optimize algorithms. We can throw more money at compute. But at the end of the day, electricity doesn't care how smart your AI is. 92 GW by 2027 is roughly equivalent to the entire electricity consumption of Germany. That's not a software problem — it's a physics problem.

What I find brilliant about "Flexible Load Management" is that it's governance at the infrastructure level. The companies willing to build flexibility into their models, accepting that they can't run at 100% capacity 100% of the time, will have a structural advantage.

This is a lesson that applies far beyond data centers: the organizations that succeed with AI aren't the ones that demand maximum output. They're the ones that understand constraints, context, downstream impact, and design around them.

Source: PPL Pennsylvania pilot program on flexible load integration; U.S. Department of Energy grid demand projections

Shift 3: Equity as Economic Infrastructure

What happened: The U.S. Black Chambers (USBC) released its "BLACKprint", a policy framework that positions Black business as the fundamental "spinal cord" of the American economy.

The shift: This is a move from traditional advocacy to systemic economic integration. The USBC is arguing that America's global competitiveness is tethered to the parity of its Black entrepreneurs.

The three pillars:

M&A as Wealth Creation: Moving beyond small-business support to focus on Mergers & Acquisitions as the primary engine for building generational wealth

Tax Code Preservation: Prioritizing the preservation of Section 199A, the 20% deduction for pass-through entities that enables reinvestment in Black-owned firms

Re-entry Entrepreneurship: Viewing newly returning citizens as a critical labor and entrepreneurial resource, ensuring that systemic justice disparities don't remain a drag on national GDP

"America's success and Black business owners' success go hand in hand." — Ron Busby, Sr., President & CEO, USBC

🧠 Here’s What I Think:

This framework matters to me personally, as a Black woman building in the AI space, and professionally, as someone who studies how technology creates and distributes economic opportunity. I’ve long said technology is the great equalizer for Black people and for generating Black wealth.

The BLACKprint does something I've been arguing for years: it reframes Black economic participation from a diversity conversation to a competitiveness conversation.

What I appreciate most is the M&A focus. I've watched too many brilliant Black founders build incredible businesses only to hit a ceiling because they didn't have access to the same deal-making infrastructure that builds generational wealth for other communities. Grants help businesses survive. M&A helps businesses scale.

Source: USBC BLACKprint Policy Framework

Shift 4: The Great Legislative Spaghetti

What happened: While Washington pursues "brute-force" efficiency, the states are filling the vacuum with a dense, sophisticated patchwork of localized AI laws.

The numbers: At least 45 states have introduced AI bills in 2026.

Forward-leaning state governance:

| State | Initiative |
|---|---|
| Utah | "Learning Laboratory" program: a controlled environment to assess AI risks in real time |
| Maryland | Dedicated AI Subcabinet overseeing procurement and ethical deployment across all state agencies |
| Colorado | Mandating disclosures for "high-risk" systems to prevent algorithmic collusion and discrimination |
| New Hampshire & South Dakota | Criminalizing AI-generated child pornography and deepfake fraud |

🧠 Here’s What I Think:

Here's what most people miss: the states are doing the governance work that the federal government won't.

While Washington is "flooding the zone" with AI-generated regulations (irony noted), 45 states are building actual guardrails. They're asking the questions that responsible AI adoption requires: What counts as high-risk? What disclosures should be mandatory? How do we test AI policy before it affects millions of people?

Utah's "Learning Laboratory" is particularly smart. It's essentially a sandbox for AI governance: test, learn, adjust before you scale. That's the approach I advocate for in enterprise AI adoption, and it's encouraging to see it at the policy level.

Sources: NCSL AI Legislation Tracker; State government announcements

🫖 Shift 5: "Speed Wins" — The Department of War's AI Strategy Memo

What happened: On January 9, 2026, the Secretary of War issued a memorandum directing the Department of War to become an "AI-first" warfighting force. The document reveals the clearest articulation yet of the federal government's "speed over governance" mentality.

The key directives:

"We must accept that the risks of not moving fast enough outweigh the risks of imperfect alignment."

30-day model deployment: CDAO must establish delivery cadence enabling the latest AI models to be deployed within 30 days of public release

"Barrier Removal Board": Monthly meetings with authority to waive non-statutory requirements

Model objectivity benchmarks: Procurement criteria requiring models "free from usage policy constraints" for "any lawful military applications"

Seven "Pace-Setting Projects" including "Swarm Forge," "Agent Network," and "Ender's Foundry"

The explicit philosophy:

"Speed Wins. We must internalize that Military AI is going to be a race for the foreseeable future, and therefore speed wins. We must weaponize learning speed."

🧠 The Jeneba Lens:

This memo is AI slop at best. The purest expression of the governance gap I've been warning about.

On one hand, I understand the competitive pressure. AI-enabled warfare will redefine military affairs over the next decade. The US needs to maintain a tech advantage.

But here's what’s wrong: The memo explicitly states that "the risks of not moving fast enough outweigh the risks of imperfect alignment."

This is the same logic that created the Moltbot scandal, only now at the federal level, with nuclear-armed, AI-enabled weapons systems.

The memo mentions "Responsible AI" exactly once, in a section titled "Out with Utopian Idealism, In with Hard-Nosed Realism." The reframe? AI models should be free from "ideological tuning" and "usage policy constraints."

What's missing from a 6-page strategy document about AI-first transformation?

Human-in-the-loop requirements for autonomous systems

Accountability frameworks for AI-enabled decisions

Bias testing and fairness requirements

Civilian oversight mechanisms

Whistleblower protections

The pattern I keep seeing: When organizations prioritize velocity over validity, they accumulate governance debt that eventually leads to a disaster. The question isn't whether this approach creates risk. The question is who bears the consequences when it fails.

Source: Secretary of War Memorandum: Artificial Intelligence Strategy for the Department of War, January 9, 2026

✊🏾 Black Innovation Spotlight -

Photo Credit: Vertus Partners; https://www.linkedin.com/pulse/influential-black-tech-innovators-over-years-vertus-partners/

Black History Month Edition

Black innovation gets the luxury editorial treatment it always deserved.

A new thought piece is circulating that asks the uncomfortable question: Will the U.S. repeat historical exclusion of Black innovators as AI reshapes economic growth?

The argument: If Black founders aren't centered in the AI economy now, we risk recreating the same exclusionary patterns that defined previous technological revolutions. Inclusion isn't just equity work; it's how you get stronger innovation and broader economic benefit.

Generative AI as Equalizer

Tools like Amazon's generative AI are increasingly recognized for enabling Black entrepreneurs to access advanced technology infrastructure. The barrier to entry for building and scaling AI-powered apps and sites has never been lower, and Black founders are moving fast.

The Signal: Black Founders Are Deploying AI Where It Matters Most

Black entrepreneurs are showing out with AI being deployed across verticals that mainstream tech often ignores: healthcare, education, fintech, sustainability, and consumer personalization. Eight Black entrepreneurs to watch now.

Here's who I'm watching:

Abdoulaye Diack /

He announced the Waxal Dataset, a groundbreaking open-source initiative aimed at bridging the digital divide for African languages. Google and its partners have released a massive, open-source speech dataset featuring nearly 2 million records across a wide array of African languages (including Hausa, Yoruba, Amharic, Swahili, and many more). This is a foundational move for AI Sovereignty in Africa. It moves the continent from being just a consumer of AI technology to a creator and a builder of it, using data that accurately reflects its own linguistic diversity.

Finally AI in African languages:

Massive Scale & Accessibility: Nearly 2 million speech records released under a permissive license (CC BY 4.0), allowing startups and researchers to use the data for commercial products.

Empowering Local Innovation: By providing the "fuel" (data) for AI, this enables the creation of voice assistants, translation tools, and services in native African languages.

African-Led Execution: The project was a "masterclass in resourcefulness," involving African institutions that built their own recording studios and custom apps to collect the data.

Sustainable Ecosystem: The project is transitioning to the Masakhane African Languages Hub, ensuring the mission continues through local community ownership and future RFP

Resources mentioned:

Dataset: Available on Hugging Face

Collaborate: Masakhane Hub

Candace Mitchell / MyAvana: AI-powered beauty tech using machine learning for personalized hair care insights. Solving the textured hair gap that mainstream beauty tech keeps missing.

Signvrse / Terp 360: African startup with Black leadership building AI-powered sign language translation. Real-time AI combined with 3D avatars to expand accessibility for the deaf community.

Yinka Iyinolakan / CDIAL.AI: Innovating with AI models tailored for indigenous languages, addressing gaps in digital representation and localized AI utility across Africa.

The Ecosystem: Where the Support Lives

Black in AI continues expanding its reach in research, policy advocacy, and entrepreneurship support — building infrastructure that directly serves Black AI researchers and builders.

Black Founders Network (BFN) remains a key inclusive community connecting founders to mentorship, capital access, and entrepreneurial resources across venture stages.

DMZ Black Innovation Summit awarded a record ~$400K in funding to Black-led startups in late 2025, showcasing expanding capital flows into underrepresented founder ventures.

The wins are real. So are the gaps.

But long-standing funding inequities persist. Historical analysis shows Black founders receive a disproportionately small share of venture capital relative to the value they deliver.

The pattern: Black innovation isn't waiting for permission. But the ecosystem still has work to do.

I’ve always said AI will be the great equalizer for us.

🧠 Pretty Minds On AI

Where the art, psychology, style and taste of AI live.

We're entering an era where AI can generate at infinite speed. But generation isn't the bottleneck anymore.

Judgment is.

The ability to:

Know when AI output is "good enough" vs. dangerous

Distinguish a clear train of thought and quality thinking from AI slop

Decide what to verify, what to question, what to reject

Apply taste, context, and ethics that the model doesn't have

This is why I built the Think Before You Prompt™ framework. Not because AI is bad, but because speed without judgment is dangerous.

The people who will thrive in this era aren't the fastest prompters. They're the ones who know when to slow down.

Enter T.H.I.N.K.™: the discipline of preserving human authority, clarity, and judgment before delegating intelligence to a machine.

T.H.I.N.K.™

Target the outcome.

Hold the judgment.

Input with intention.

Name the task.

Keep that same human energy — human in the lead.

I design and deliver human-led AI literacy frameworks, training, and enablement programs that help organizations use generative AI strategically, responsibly, and with preserved human judgment.

One of my signature frameworks, Think Before You Prompt™ (T.H.I.N.K.™), teaches creative leaders the cognitive discipline required to use AI as a strategic thought partner, not a shortcut or decision-maker.

I’m going to be rolling this framework out later this year, and I’ll be available for workshops and advisory to teach it.

I don’t teach people how to prompt faster.

I teach them how to think clearly—so AI actually works.

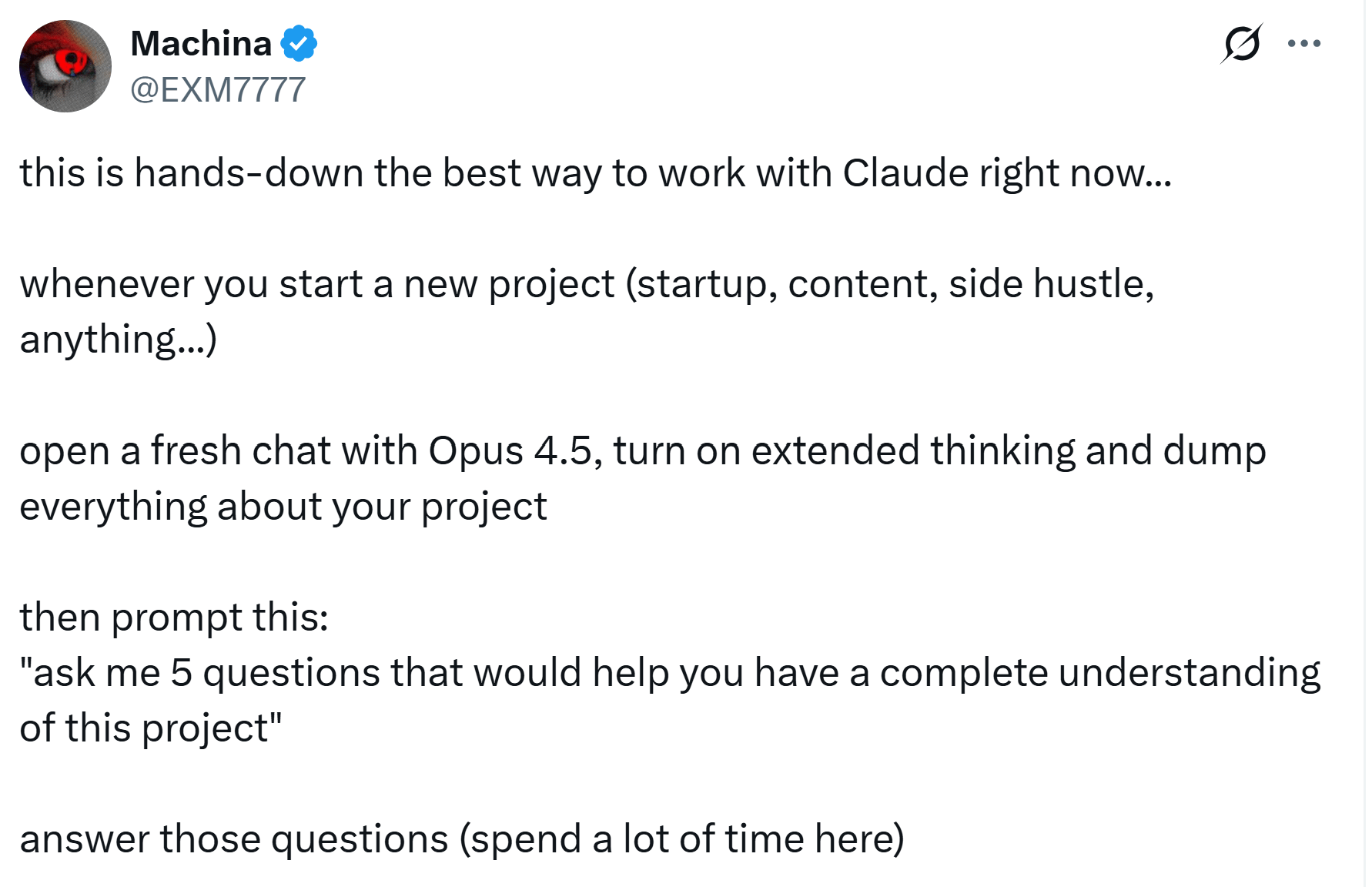

Prompt Literacy in Action: The "Co-Founder" Method

This prompt pattern from @EXM7777 on X is one of the best examples I've seen of thinking with AI instead of just prompting AI:

The Setup:

Open a fresh chat with Claude (Opus 4.5 with extended thinking). Dump everything about your project — startup, content, side hustle, anything.

Step 1: Let AI ask the questions

"Ask me 5 questions that would help you have a complete understanding of this project."

Step 2: Answer deeply (this is where the magic happens)

Spend real time here. Don't rush. Your answers become the context that makes everything else work.

Step 3: Elevate AI to thinking partner

"You're my co-founder. Create a master plan with a knowledge base directory for each section. Attach context markdown files to every part. You're building the skeleton that Claude Code will use to build everything 10x faster. Suggest skills to integrate, automations to build, or any tool that'd 10x our speed."

Why this works:

Most people prompt AI like a vending machine: insert request, receive output.

This pattern treats AI like a thinking partner:

You provide the raw context (the messy, unstructured reality of your project)

AI asks clarifying questions (forcing you to articulate what you actually know and don't know)

You answer with depth (the thinking happens HERE, not in the AI's response)

AI structures what you've co-created (turning your thinking into actionable architecture)

Notice: The AI isn't doing the thinking for you. It's creating the structure that makes your thinking usable.

The literacy takeaway: The best prompts don't happen by just hitting the “enter” key. They create the conditions for collaborative thinking. The "co-founder" frame changes the relationship from "task executor" to "thinking partner."

Source: @EXM7777 on X — He says, "this is hands-down the best way to work with Claude right now"

WORKFLOW OF THE WEEK — Think Before You Prompt™

The Use Case: You're about to ask AI for something important — a strategy, a document, a decision framework — and you want the output to actually be useful, not generic slop.

The Stack: Any AI (Claude, ChatGPT, Gemini) + the Think Before You Prompt™ framework

The Thinking: Most people open an AI tool and start typing. That's like walking into a negotiation without knowing what you want. The quality of your output is determined by the quality of your thinking before you prompt, not the cleverness of your prompt itself.

The Four Steps:

Step 1: Name Your Intent Before you prompt, answer:

What specific outcome do I need from this interaction?

Why does this matter? What decision or action does it support?

What does "done" look like? How will I know the output is good enough?

Step 2: Audit Your Knowledge Take inventory:

What do I already know about this topic from my own experience?

Where are my blind spots? What am I unsure about?

What constraints, context, or stakes does AI need to know?

Step 3: Apply Your Taste Define your quality bar:

What would make this output excellent vs. just acceptable?

What tone, style, or perspective should this reflect?

What do I NOT want? What would make this feel like AI slop?

Step 4: Plan Your Evaluation Before you see any output, decide:

What claims will I need to verify?

What will I add from my own expertise that AI cannot provide?

What's my plan for round two? How will I push back?

Try It This Week:

Before your next important AI interaction, spend 5 minutes answering these 12 questions (3 per step)

Include your answers in your prompt as context

Notice how much better the output is when AI knows your intent, constraints, taste, and evaluation criteria

The Literacy Takeaway: AI is a mirror. It amplifies what's already there. If you bring nothing, you get noise. If you bring clarity, context, and taste, you get strategic partnership.

💬 PROMPT OF THE WEEK — The Think Before You Prompt™ Generator

Use this prompt template to structure your thinking before any important AI interaction:

# Think Before You Prompt™

*Prompt crafted with intention, judgment, and taste.*

---

## My Intent

I need: [specific outcome]

This matters because: [decision or action it supports]

Success looks like: [how you'll know it's good enough]

---

## What I Bring (My Context)

What I already know: [your expertise on this topic]

Where I need help: [your blind spots or gaps]

Key constraints: [budget, timeline, audience, regulations, etc.]

---

## My Quality Standard

Excellence means: [what separates great from adequate]

Tone and style: [how it should read/feel]

Do NOT: [what would make this feel like AI slop]

---

## How I'll Evaluate Your Response

I will be evaluating your output critically — not accepting it at face value. Specifically:

- I will verify: [claims or assumptions you'll check]

- I will add my own expertise on: [what only you can provide]

- For round two: [how you'll push back or iterate]

Be rigorous. Flag your own uncertainties. Tell me where your reasoning is weakest. I'd rather have honest gaps than false confidence.

Why this works: This prompt does three things:

Forces YOU to think before you prompt (the real value)

Gives AI the context it needs to actually help

Sets up a critical evaluation mindset, not passive acceptance
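If you would rather fill the template in programmatically (say, from a form or a notes file), here is one way it could look. This is a minimal sketch under my own assumptions: `PromptPlan`, `build_prompt`, and the field names are made up for illustration, and the rendered text is a condensed version of the template above. The one deliberate design choice is that it refuses to produce a prompt until every answer is filled in.

```python
from dataclasses import dataclass

@dataclass
class PromptPlan:
    """Hypothetical container for the 12 answers (3 per step)."""
    outcome: str
    why_it_matters: str
    success: str
    known: str
    gaps: str
    constraints: str
    excellence: str
    tone: str
    avoid: str
    verify: str
    my_expertise: str
    round_two: str

def build_prompt(plan: PromptPlan) -> str:
    """Render the plan as prompt text; refuse if any answer is blank."""
    missing = [name for name, value in vars(plan).items() if not value.strip()]
    if missing:
        raise ValueError("Think first. No answer yet for: " + ", ".join(missing))
    return "\n".join([
        "## My Intent",
        f"I need: {plan.outcome}",
        f"This matters because: {plan.why_it_matters}",
        f"Success looks like: {plan.success}",
        "",
        "## What I Bring (My Context)",
        f"What I already know: {plan.known}",
        f"Where I need help: {plan.gaps}",
        f"Key constraints: {plan.constraints}",
        "",
        "## My Quality Standard",
        f"Excellence means: {plan.excellence}",
        f"Tone and style: {plan.tone}",
        f"Do NOT: {plan.avoid}",
        "",
        "## How I'll Evaluate Your Response",
        f"- I will verify: {plan.verify}",
        f"- I will add my own expertise on: {plan.my_expertise}",
        f"- For round two: {plan.round_two}",
        "",
        "Be rigorous. Flag your own uncertainties.",
    ])

plan = PromptPlan(
    outcome="a one-page AI usage policy for a 10-person studio",
    why_it_matters="we onboard two new clients next month",
    success="a draft our lawyer can review in under 30 minutes",
    known="our tools, our client contracts, our workflow",
    gaps="data retention rules and vendor terms",
    constraints="plain language, one page, no legal jargon",
    excellence="specific to our studio, not a generic template",
    tone="direct and warm",
    avoid="buzzwords and filler disclaimers",
    verify="any legal or regulatory claim",
    my_expertise="how our team actually works day to day",
    round_two="ask for the three weakest sections and rewrite them",
)
prompt = build_prompt(plan)
print(prompt)
```

Leave any field empty and `build_prompt` raises an error instead of returning text. That is the framework in code form: no thinking, no prompt.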

Want help with this?

I’m building a community for creatives and AI-curious professionals who want to learn what to do next with AI: what to use, what to build, and how to do it.

Here’s the wait list so you don’t miss out when I open doors next week: https://prompt-party-playground.lovable.app

📚 RESOURCES — Level Up Your AI Literacy

Tools I'm Using This Week

TLDW — Video Intelligence Stop watching hour-long videos for one insight. TLDW extracts the moments that matter — with timestamps, citations, and a chat interface to ask questions about any video.

Kimi Slides — AI Presentations Generate presentation-ready slides from your content. Clean design, logical structure, no more blank template paralysis.

TLDW — Stop Summarizing, Start Extracting

AI video summaries are solving the wrong problem.

Tired of skipping through YouTube videos when you only need the 5 minutes that actually change how you think?

Most AI tools compress videos. TLDW extracts the gems.

What it finds:

The breakthrough idea buried at 37:42

The surprising insight you would've missed

The quote you'll remember for years

What it eliminates:

Watching entire videos just for one insight

Skipping around aimlessly hoping to find the good part

Generic summaries that miss the point

What else you get:

AI chat that answers questions with exact video citations

Save notes on moments worth remembering

"Play All" mode to watch highlights back-to-back

Perfect for: Podcasts, interviews, conference talks, YouTube rabbit holes.

The literacy takeaway: The best AI tools don't just compress information — they extract signal. TLDW understands that your time is better spent on 5 transformative minutes than 60 mediocre ones.

Source: TLDW — h/t Superhuman newsletter for the recommendation

🎨 Kimi Slides — AI-Powered Presentations

If you're still building slides from scratch, meet Kimi Slides.

Upload your content, describe what you need, and Kimi generates presentation-ready slides. Clean design. Logical structure. No more staring at blank PowerPoint templates.

Best for: Quick pitch decks, internal presentations, turning documents into visual stories.

Source: Kimi Slides — Moonshot AI

High Tea Time - By Jeneba

Agency > Automation

This is the principle I keep coming back to, and my thesis on AI:

"Never outsource your agency for agentic productivity promises. The goal isn't to see how much work you can offload to a bot. It's to see how much human judgment, meaning, and taste you can amplify with AI."

What I Want to Hear From You

What are YOU paying attention to right now?

Hit reply and tell me:

What AI story do you think deserves more attention?

What prompt or workflow would you like me to break down?

What question do you wish someone would answer?

Your responses shape this newsletter. I read every single one.🤞🏾

Until then, stay curious. Stay critical. Think before you prompt.

HIGH TECH TEA Where creative, aesthetic, and cultural intelligence meet responsible Black innovation

Let's build the future with intention.

— Jeneba